I recently read an interesting paper that was published last year, by Igor Barbosada Costa, Leandro Balby Marinho and Carlos Eduardo Santos Pires: Forecasting football results and exploiting betting markets: The case of “both teams to score. In the paper they try out different approaches for predicting the probability of both teams to score at least one goal (“both teams to score”, or BTTS for short). A really cool thing about the paper is that they actually used my goalmodel R package. This is the first time I have seen my package used in a paper, so im really excited bout that! In addition to using goalmodel, they also tried a few other machine learning approaches, where insted of trying to model the number of goals by using the Poisson distribution, they train the machine learning models directly on the both-teams-to-score outcome. They found that both approaches had similar performance.

I have to say that I personally prefer to model the scorelines directly using Poisson-like models, rather than trying to fit a classifier to the outcome you want to predict, whether it is over/under, win/draw/lose, or both-teams-to-score. Although I can’t say I have any particularly rational arguments in favour of the goal models, I like the fact that you can use the goal model to compute the probabilities of any outcome you want from the same model, without having to fit separate models for every outcome you are interested in. But then again, goalmodels are of course limited by the particlar model you choose (Poisson, Dixon-Coles etc), which could give less precice predictions on some of these secondary outcomes.

Okay, so how can you compute the probability of both teams to score using the Poisson based models from goalmodel? Here’s what the paper says:

This is a straightforward approach where they have the matrix with the probabilities of all possible scorelines, and then just add together the probabilities that correspond to the outcome of at least one team to score no goals. And since this is the exact opposite outcome (the complement) of both teams to score, you take one minus this probability to get the BTTS probability. I assume thay have used the predict_goals() function to get the matrix in question from a fitted goal model. In theory, the Poisson model allows to an infinite amount of goals, but in practice it is sufficient to just compute the matrix up to 10 or 15 goals.

Heres a small self-contained example of how the matrix with the score line probabilities is computed by the predict_goals() function, and how you can compute the BTTS probability from that.

# Expected goals by the the two oppsing teams. expg1 <- 1.1 expg2 <- 1.9 # The upper limit of how many goals to compute probabilities for. maxgoal <- 15 # The "S" matrix, which can also be computed by predict_goals(). # Assuming the independent Poisson model. probmat <- dpois(0:maxgoal, expg1) %*% t(dpois(0:maxgoal, expg2)) # Compute the BTTS probability using the formula from the paper. prob_btts <- 1 - (sum(probmat[2:nrow(probmat),1]) + sum(probmat[1,2:ncol(probmat)]) + probmat[1,1])

In this example the probability of both teams to score is 56.7%.

In many models, like the one used above, it is assumed that, the goal scoring probabilites for the two oppsing teams are statistically independent (given that you provide the expected goals). For this type of model there is a much simpler formula for computing the BTTS probability. Again, you compute the probability of at each of the teams to not score, and then take 1 minus these probabilities. And the probability of at least one team to score is just the product of the probability of both teams to score. In mathematical notation this is

where X is the random variable for the number of goals scored by the first team, and Y is the corresponding random variable for the second team. The R code for this formula for the independent Poisson model is

prob_btts_2 <- (1 - dpois(0, lambda = expg1)) * (1 - dpois(0, lambda = expg2))

which you can verify gives the same result as the matrix-approach. This formula only works with the statistical independence assumption. The Dixon-Coles and bivariate Poisson (not in the goalmodel package) models are notable models that does not have this assumption, but instead have some dependence (or correlation) between the goal scoring probabilities for the two sides.

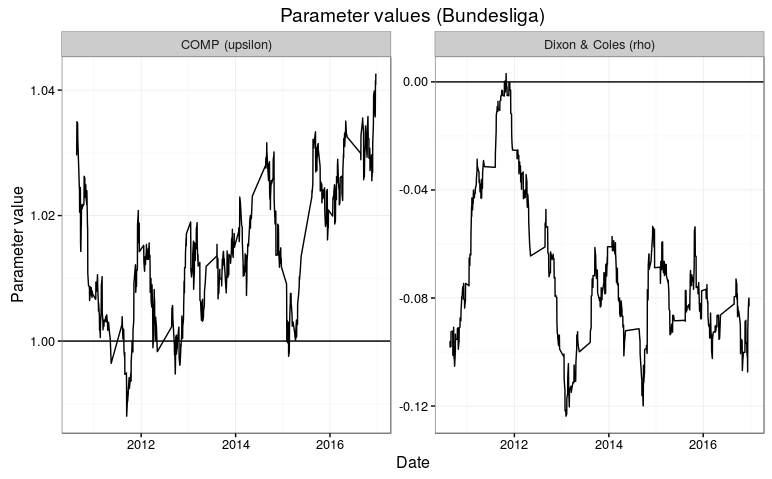

I have also found a relatively simple formula for BTTS probability for the Dixon-Coles model. Recall that the Dixon-Coles model applies an adjustment to low scoring outcomes (less than two goals scored by either team), shifting probabilities to (or from) 0-0 and 1-1 to 1-0 and 0-1 outcomes. The amount of probability that is shifted depends on the parameter called rho. For the BTTS probability, it is the adjustment to the probability of the 1-1 outcome that is of interest. The trick is basically to subtract the probability of the 1-1 outcome for the underlying independent model without the Dixon-Coles adjustment, and then add back the Dixon-Coles adjusted 1-1 probability. The Dixon-Coles adjustment for the 1-1 outcome is simply 1 – rho, which does not depend on the expected goals of the two sides.

Here is some R code that shows how to apply the adjustment:

# Dixon-Coles adjustment parameter. rho <- 0.13 # 1-1 probability for the independent Poisson model. p11 <- dpois(1, lambda = expg1) * dpois(1, lambda = expg2) # Add DC adjusted 1-1 probability, subtract unadjusted 1-1 probability. dc_correction <- (p11 * (1-rho)) - p11 # Apply the corrections prob_btts_dc <- prob_btts_2 + dc_correction

If you run this, you will see that the BTTS probability decreases to 55.4% when rho = 0.13.

I have added two functions for computing BTTS probabilities in the new version 0.6 of the goalmodel package, so be sure to check that out. The predict_btts() function works just like the other predict_* functions in the package, where you give the function a fitted goalmodel, together with the fixtures you want to predict, and it gives you the BTTS probability. The other function is pbtts(), which works independently of a fitted goalmodel. Instead you just give it the expected goals, and other paramters like the Dixon-Coles rho parameter, directly.